Saving Models to a Beam Volume

Mount a Volume in your app and save your TensorFlow models to it.

    from beam import App, Runtime, Image, Volume
    import tensorflow as tf
    import tensorflow_hub as hub

    beam_cache_path = "./models"

    app = App(
        name="tf-example",
        runtime=Runtime(
            cpu=1,
            memory="4Gi",
            image=Image(
                python_version="python3.8",
                python_packages=["tensorflow_hub", "tensorflow"],
            ),
        ),
        volumes=[Volume(path=beam_cache_path, name="models")],
    )


    @app.rest_api()
    def save_model():
        model = hub.load("https://tfhub.dev/google/universal-sentence-encoder/4")
        # Save models to Beam Volume
        tf.saved_model.save(model, beam_cache_path)
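
The example above saves directly into the root of the mounted path, so a second model saved the same way would land in the same directory. One possible way to keep several models side by side is to give each its own subdirectory. The sketch below is an illustration only: model_dir and its name and version arguments are hypothetical helpers, not part of the Beam SDK, and it assumes the Volume is mounted at ./models as in the example.

```python
from pathlib import Path

# Assumption: same mount path as the Volume in the example above.
beam_cache_path = "./models"


def model_dir(name: str, version: str, root: str = beam_cache_path) -> Path:
    """Return (and create if needed) a per-model, per-version directory."""
    path = Path(root) / name / version
    # exist_ok=True makes repeated calls safe when the directory already exists.
    path.mkdir(parents=True, exist_ok=True)
    return path


# Hypothetical usage inside save_model():
#   export_dir = model_dir("universal-sentence-encoder", "4")
#   tf.saved_model.save(model, str(export_dir))
```

The same helper can then be reused when loading, so the save and load paths cannot drift apart.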

Loading Models from a Beam Volume

To load a model saved in the Volume, pass the Volume path to hub.load():

    @app.rest_api()
    def load_saved_model():
        # Load the model from the Beam Volume
        loaded_model = hub.load(beam_cache_path)
        # Run the saved model
        embeddings = loaded_model(["This is an example sentence."])
        # Print model output
        print(embeddings)
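
Loading from the Volume only works once a model has been saved there. A cheap guard, sketched below, is to check for the saved_model.pb file that tf.saved_model.save() writes at the top level of its export directory. The has_saved_model helper is a hypothetical name introduced for illustration, not a Beam or TensorFlow API.

```python
from pathlib import Path


def has_saved_model(path: str) -> bool:
    """Return True if `path` looks like a TensorFlow SavedModel directory."""
    # tf.saved_model.save() writes saved_model.pb at the top level of the
    # export directory, so its presence is a cheap existence check.
    return (Path(path) / "saved_model.pb").is_file()


# Hypothetical usage inside load_saved_model():
#   if not has_saved_model(beam_cache_path):
#       raise FileNotFoundError("No model in the Volume yet; call save_model first.")
```

This check only confirms that the file exists; it does not validate that the model itself is complete or loadable.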

Deploying TensorFlow Models

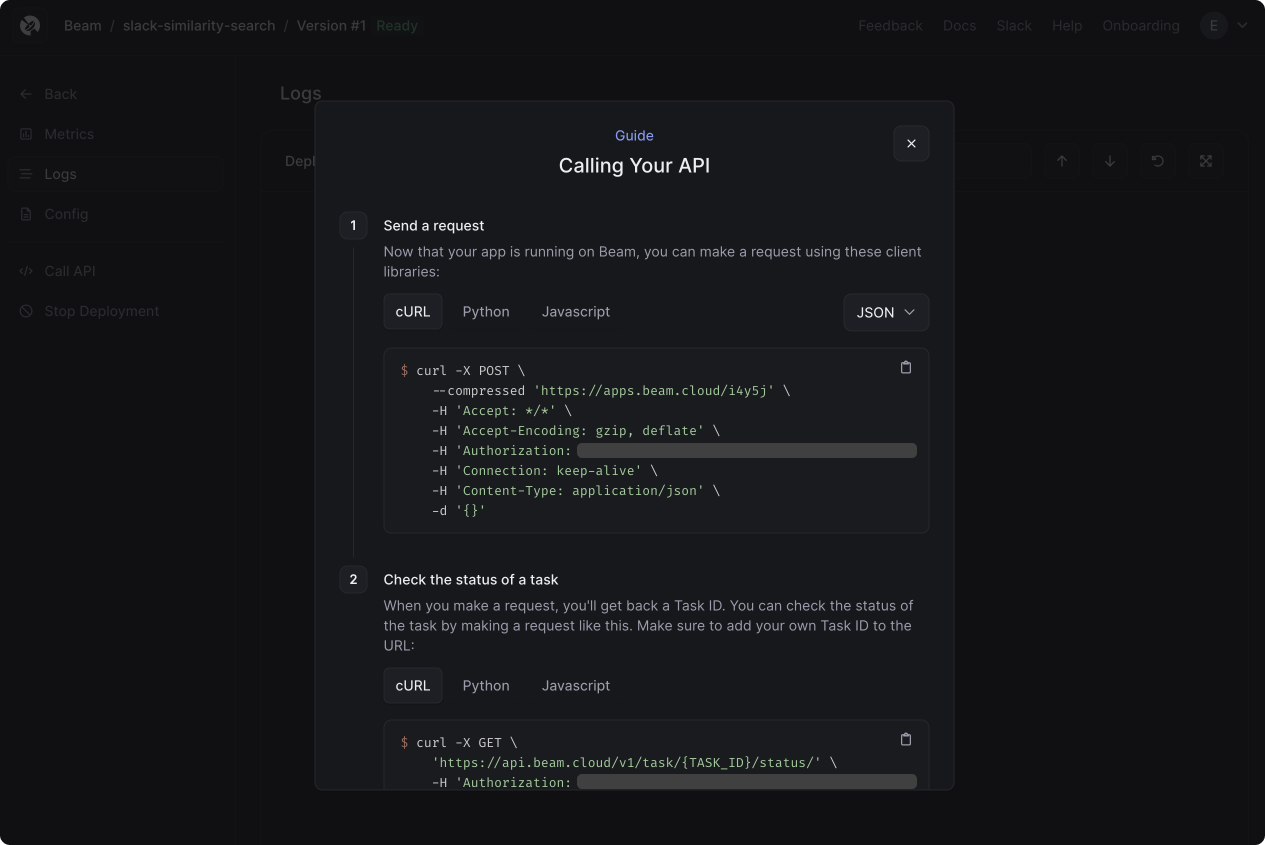

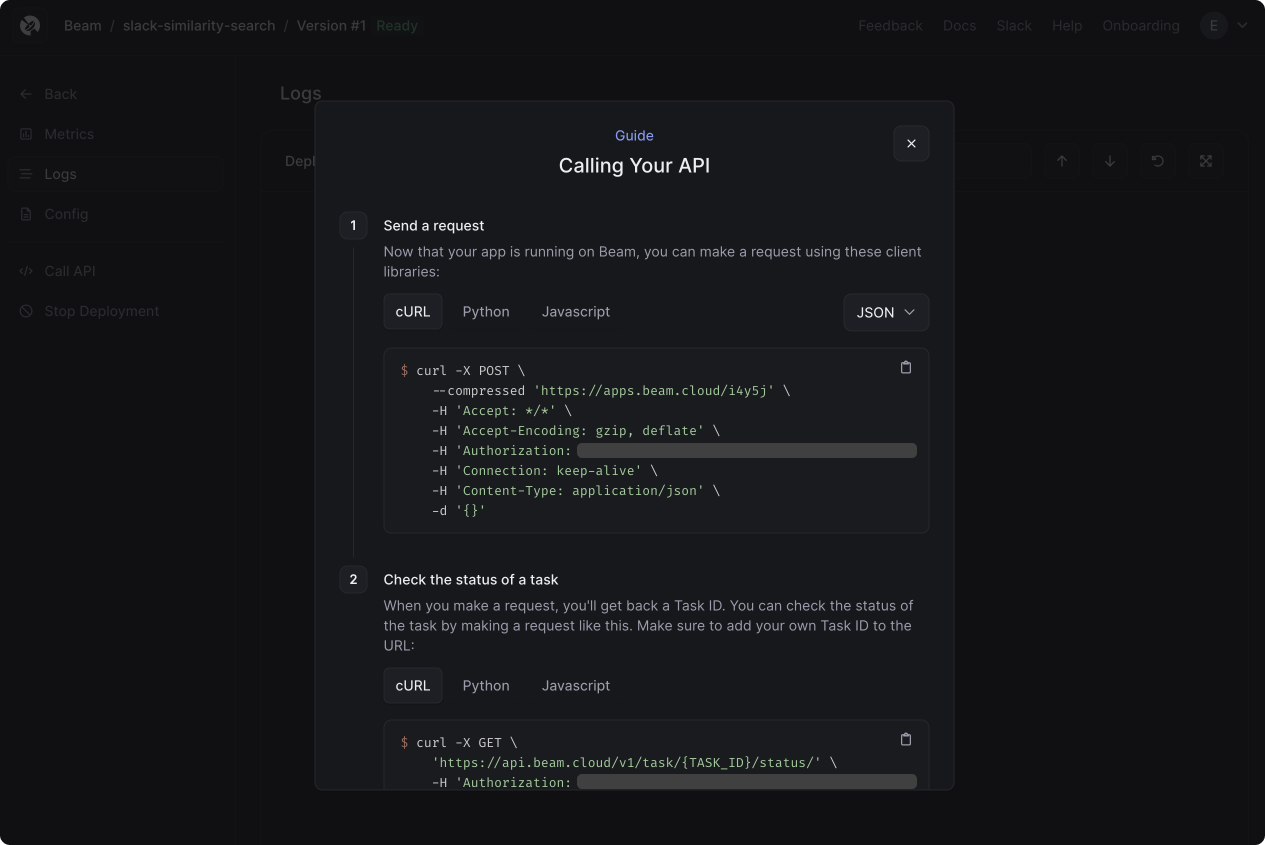

You can deploy your code as a REST API, Task Queue, or Scheduled Job with the beam deploy command, passing the file name and the name of the decorated function (for example, save_model):

    beam deploy app.py:your-function-name
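
Once a function is deployed as a REST API, it can be called over HTTP. The sketch below shows one way to build such a request with the Python standard library. Everything specific here is an assumption rather than something this page states: the endpoint URL and the Authorization header value are placeholders you would copy from your own deployment, and the use of POST with a JSON body is assumed.

```python
import json
import urllib.request


def build_request(url: str, auth_header: str, payload: dict) -> urllib.request.Request:
    """Build a JSON POST request for a deployed endpoint (not sent yet)."""
    body = json.dumps(payload).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            # Placeholder: use the exact header value given for your deployment.
            "Authorization": auth_header,
            "Content-Type": "application/json",
        },
    )


# Hypothetical usage; the URL and token below are placeholders, not real values:
#   req = build_request("https://<your-endpoint-url>", "<your-auth-header>", {})
#   with urllib.request.urlopen(req) as resp:
#       print(resp.read().decode("utf-8"))
```

Keeping request construction separate from sending makes it easy to inspect the method, headers, and body before any network call is made.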

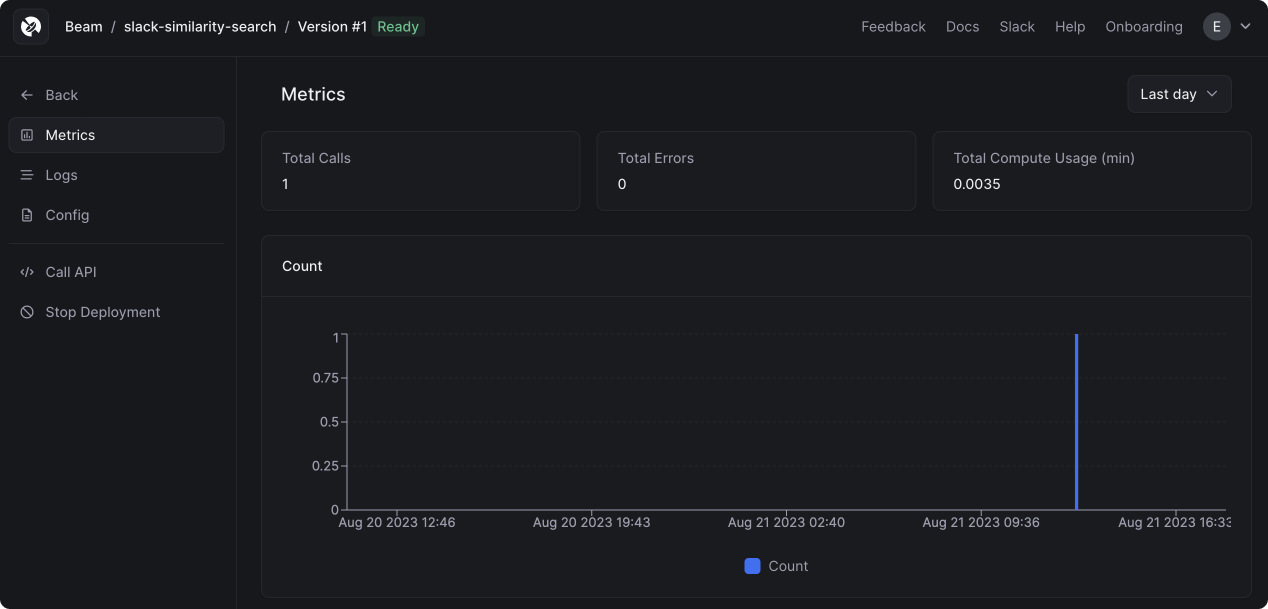

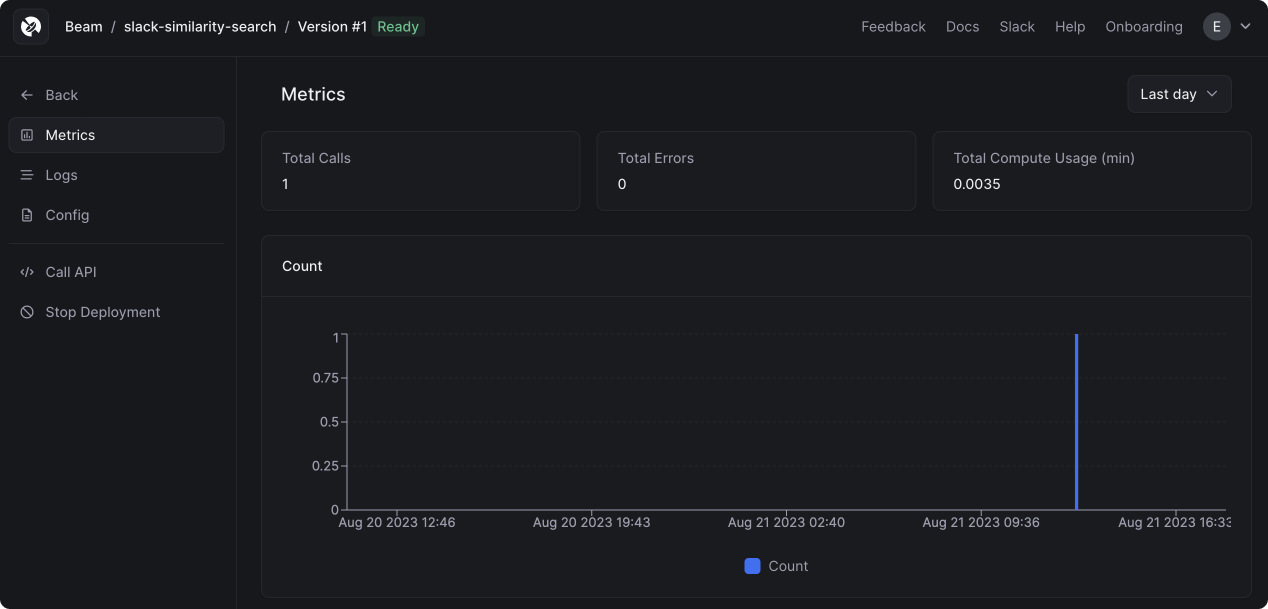

After making a request, you’ll see metrics appear in the dashboard:
